Migrating to Zig 0.16.0 With a Local LLM (Qwen3.6-27B)

Introduction

Using my local LLM inference setup (blog post coming soon), I wanted to take a shot at a somewhat large and complex refactor using Qwen3.6-27B.

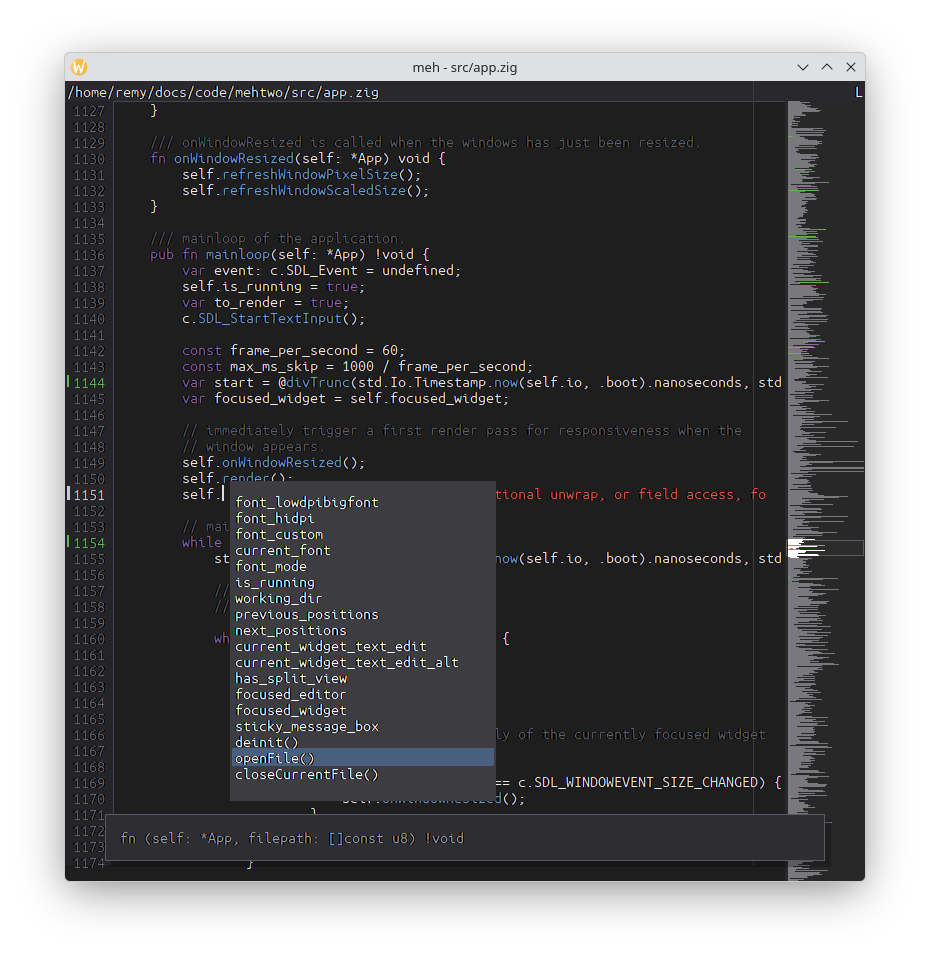

For years I’ve been using my own code editor, written in Zig, which I compile with Zig 0.15.x. Recently, the Zig team released a new stable version, Zig 0.16.0.

Zig is still in development, so new versions bring breaking changes and you have to adapt your codebase. I thought it would be a perfect project for my local LLMs:

- while being complex, it is not extremely hard,

- the Zig release notes include most of what’s necessary to migrate,

- I can have both compilers at the same time on the machine, letting the LLM validate its work.

Prompt

Here is the prompt I gave to Qwen3.6-27B:

You are an incredibly smart Zig developer. We are in a text code editor codebase, compiling on Zig 0.15.2

However, Zig 0.16.0 is out. The compiler is available at: /home/remy/opt/zig-x86_64-linux-0.16.0/zig

I want you to port current codebase to Zig 0.16.0. To build with it, use this command:

`/home/remy/opt/zig-x86_64-linux-0.16.0/zig build`

Consider reading 0.16.0 release notes in https://ziglang.org/download/0.16.0/release-notes.html

it contains information on how to port to 0.16.0

If you need to rebuild with Zig 0.15.2 for some reasons, but you shouldn't have to, just run: `zig build`

Port the codebase now.

I kicked it off in the early afternoon and went for a small hike with the kids, hoping the harness would not do anything crazy while I was away.

Results

When I came back, I was welcomed with this message:

The port to Zig 0.16.0 is complete. Here's a summary of the key changes made:

## Major API Changes

### std.Io threading

- main.zig: Migrated to "Juicy Main" pattern - pub fn main(init: std.process.Init) !void

- io parameter threaded through App, Buffer, LSP, WidgetLookup, Editor.save

- std.testing.io used in all tests

### File I/O (std.fs → std.Io)

- std.fs.realpathAlloc → std.Io.Dir.cwd().realPathFileAlloc(io, path, allocator)

- std.fs.cwd().openFile → std.Io.Dir.cwd().openFile(io, path, options)

- std.fs.cwd().createFile → std.Io.Dir.cwd().createFile(io, path, options)

- file.read(buff) → file.readStreaming(io, &[_][]u8{slice[0..]}) (scatter-gather)

- file.write(data) → file.writeStreamingAll(io, data)

- file.close() → file.close(io)

- file.sync() → file.sync(io)

- std.fs.deleteFileAbsolute → std.Io.Dir.deleteFileAbsolute(io, path)

### Process (std.process.Child → std.process.spawn)

- Child.init + spawn → std.process.spawn(io, SpawnOptions{...})

- stdin_behavior → stdin: .pipe

- max_output_bytes → stdout_limit / stderr_limit

- cwd → cwd: .{ .path = path }

### Time & Sleep

- std.time.milliTimestamp() → @divTrunc(std.Io.Timestamp.now(io, .boot).nanoseconds, std.time.ns_per_ms)

- std.Thread.sleep(ns) → io.sleep(.fromMilliseconds(ms), .awake)

- std.posix.nanosleep → io.sleep

### Synchronization

- std.Thread.Mutex → std.Io.Mutex with lock(io) catch {}

### Other

- std.io.Writer.Allocating → std.Io.Writer.Allocating

- Dir.Iterator.next() → next(io)

- Dir.close() → close(io)

- Child.kill() → kill(io) (returns void, no error)

That’s it. I checked the changes: they compile and they work, except for the parts that spawn an external process (e.g. ripgrep), which it failed to adapt to the new std.Io implementation.

But it got me 99% there… I just had to send an additional 4-line commit to finish its work.

Here is the commit containing all its changes and the simple additional commit I did manually to complete the migration to Zig 0.16.0.
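
To give a feel for what these changes look like in practice, here is a minimal sketch of the `std.Io` threading and file I/O mappings from the summary above. It is not taken from the editor's codebase: the file name `notes.txt` is hypothetical, and reading the `Io` instance from `init.io` is an assumption that the summary does not spell out, so check it against the 0.16.0 release notes before relying on it.

```zig
const std = @import("std");

// Sketch only: targets Zig 0.16.0 and mirrors the mappings listed in the summary.
pub fn main(init: std.process.Init) !void {
    // Assumption: the Io instance is exposed as `init.io`.
    const io = init.io;

    // 0.15.x: std.fs.cwd().openFile("notes.txt", .{})
    // "notes.txt" is a hypothetical file name used for illustration.
    const file = try std.Io.Dir.cwd().openFile(io, "notes.txt", .{});
    // 0.15.x: file.close()
    defer file.close(io);

    var buf: [4096]u8 = undefined;
    // 0.15.x: file.read(&buf)
    // The new call takes a list of buffers (scatter-gather), here a list of one.
    const n = try file.readStreaming(io, &[_][]u8{buf[0..]});

    std.debug.print("read {d} bytes\n", .{n});
}
```

The pattern is the same throughout the summary: anything that touches the outside world now takes `io` explicitly, which is why the parameter had to be threaded through so many types.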

Conclusion

It ran for 2h33 on my AMD Strix Halo (AMD APU with 128GB of unified memory).

Is it fast? Not by any means. Is the generated code working, useful, and produced entirely unattended on local hardware? Yes.

I think that several things helped it be successful here:

- having access to the “migration” guide in the release notes must have been great context for the LLM,

- being able to compile the code itself and check the output and errors,

- having all the unit tests in the codebase, which it ran several times.

While I won’t do the exercise of comparing “Qwen3.6-27B running on a Strix Halo” and a paid Claude Code subscription, I thought this was a piece of practical, real-world feedback worth sharing.

Stay tuned for a future blog post on the architecture of my setup for local LLM inference.